
Unlocking the Latent Canvas: Eliciting and Benchmarking Symbolic Visual Expression in LLMs

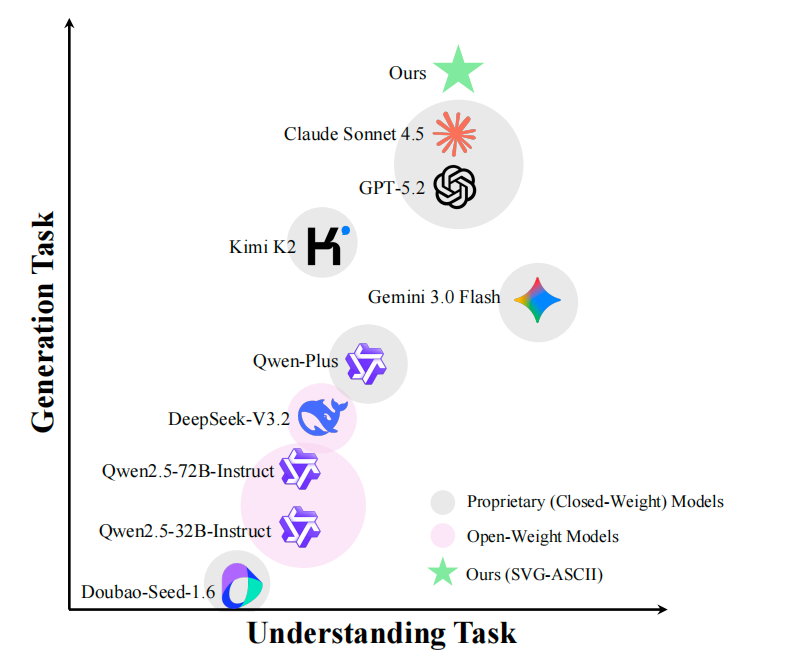

We introduce SVE-ASCII: an open 7B model (SVE-ASCII-7B), a reproducible dataset, and a standardized benchmark for symbolic visual expression via ASCII art.

What is released?

Model: SVE-ASCII-7B · Dataset: SVE-ASCII-Dataset · Benchmark: SVE-ASCII-Bench (Generation + Understanding)

Prefer details? See the GitHub README.

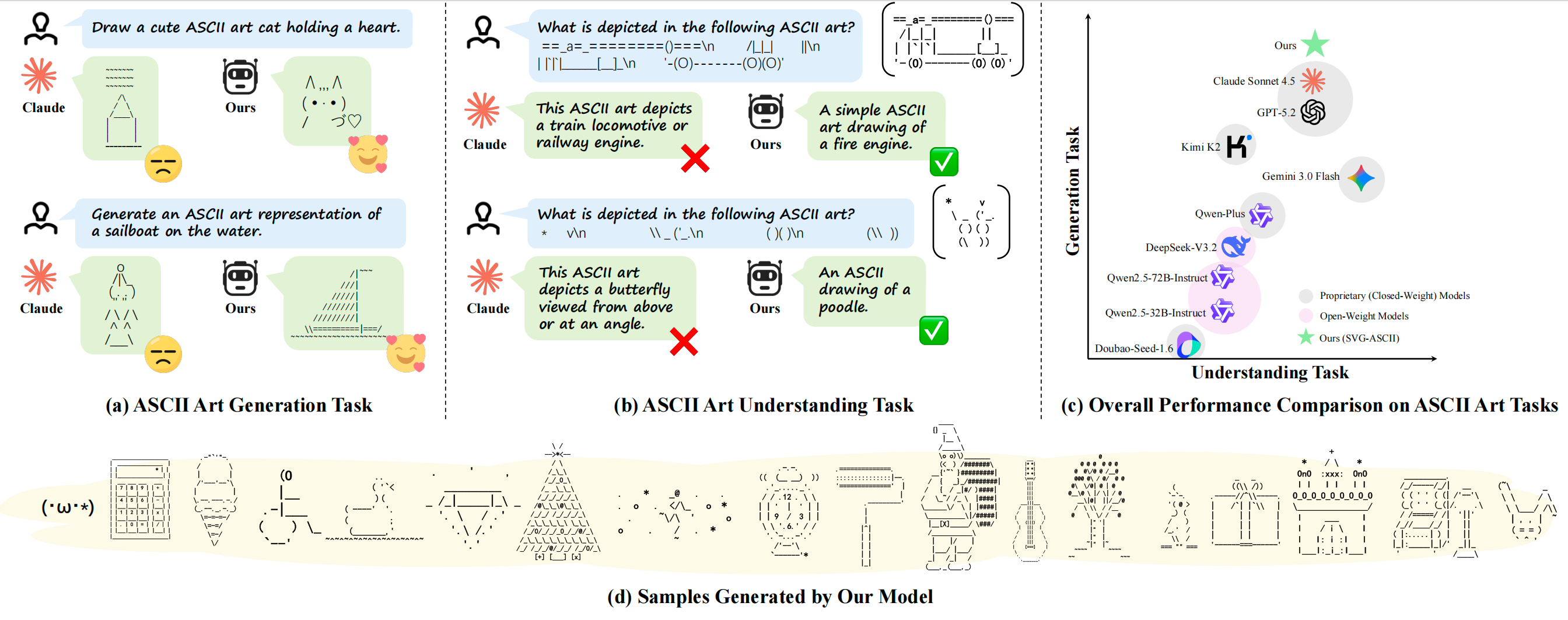

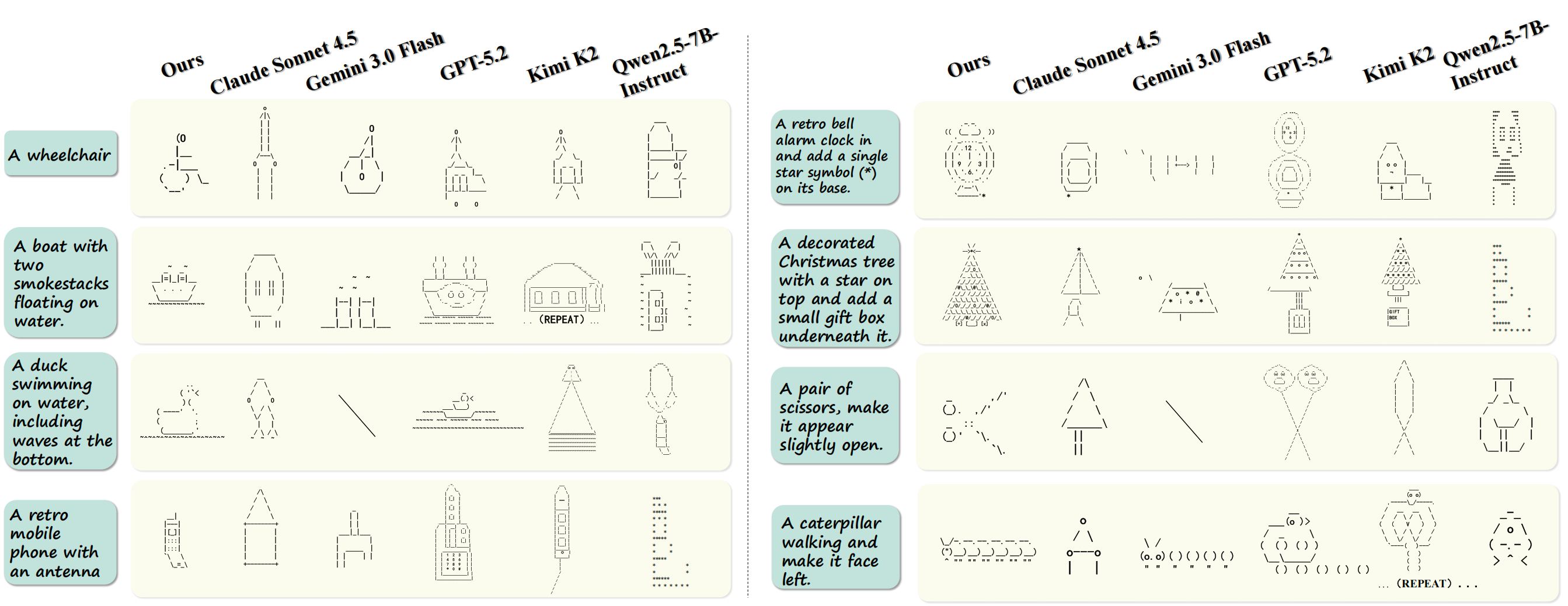

Qualitative Results

SVE-ASCII Benchmark

SVE-ASCII-Bench covers two tasks: Generation (text → ASCII art) and Understanding (ASCII art → description / label). Generation is scored with five metrics (SA, IF, SC, SL, CE) plus a weighted composite score. Scores are reported on two splits: Recall (in-distribution) and Generalization (out-of-distribution, OOD).
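The sketch below shows one way a weighted composite could combine the five Generation metrics and be reported per split. It assumes the composite is a weighted average normalized by the total weight. The equal weights, the example scores and the function names `composite_score` and `report` are hypothetical. The benchmark code defines the metrics and the official weights.

```python
from typing import Dict, Mapping

# Metric abbreviations listed for the Generation task. Their definitions and
# official weights come from the benchmark code, not from this sketch.
METRICS = ("SA", "IF", "SC", "SL", "CE")

# HYPOTHETICAL weights for illustration only (equal weighting).
EXAMPLE_WEIGHTS: Dict[str, float] = {m: 0.2 for m in METRICS}


def composite_score(scores: Mapping[str, float], weights: Mapping[str, float]) -> float:
    """Weighted average of per-metric scores, normalized by the total weight."""
    missing = [m for m in METRICS if m not in scores]
    if missing:
        raise ValueError(f"missing metric scores: {missing}")
    total_weight = sum(weights[m] for m in METRICS)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(weights[m] * scores[m] for m in METRICS) / total_weight


def report(split_scores: Mapping[str, Mapping[str, float]],
           weights: Mapping[str, float]) -> Dict[str, float]:
    """Composite score per split, e.g. 'Recall' (in-distribution) and 'Generalization' (OOD)."""
    return {split: composite_score(s, weights) for split, s in split_scores.items()}


if __name__ == "__main__":
    # Made-up numbers for demonstration, NOT benchmark results.
    demo = {
        "Recall": {"SA": 0.8, "IF": 0.6, "SC": 0.7, "SL": 0.5, "CE": 0.9},
        "Generalization": {"SA": 0.4, "IF": 0.5, "SC": 0.6, "SL": 0.3, "CE": 0.7},
    }
    print(report(demo, EXAMPLE_WEIGHTS))
```

Normalizing by the total weight means the weights need not sum to 1. If the official weights already do, the result is unchanged.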

Quick Start

```bash
# Clone & download benchmark from HF
cd SVE-ASCII/benchmark

# Generation
python eval_generation.py

# Understanding
python eval_understanding.py

# Understanding-Selection
python eval_understanding_selection.py
```

Before running, set MODEL_TYPE, API keys, and any other required options. See benchmark/README.md for details.
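The sketch below runs the three Quick Start scripts in sequence from the benchmark directory and stops at the first failure. It assumes MODEL_TYPE can be passed as an environment variable. That is a guess, since the scripts may instead read it from a config file or a flag, and benchmark/README.md is authoritative. The helpers `build_commands` and `run_all` are hypothetical.

```python
import os
import subprocess
import sys
from pathlib import Path
from typing import List, Optional

# The three evaluation entry points listed in the Quick Start.
SCRIPTS = (
    "eval_generation.py",
    "eval_understanding.py",
    "eval_understanding_selection.py",
)


def build_commands(python: str = sys.executable) -> List[List[str]]:
    """One argv list per evaluation script, run with the given interpreter."""
    return [[python, script] for script in SCRIPTS]


def run_all(benchmark_dir: str = "SVE-ASCII/benchmark",
            model_type: Optional[str] = None,
            dry_run: bool = False) -> List[List[str]]:
    """Run every evaluation script from benchmark_dir, stopping at the first failure.

    ASSUMPTION: exporting MODEL_TYPE as an environment variable is a guess;
    benchmark/README.md documents how the scripts actually read it.
    """
    env = dict(os.environ)
    if model_type is not None:
        env["MODEL_TYPE"] = model_type  # hypothetical override
    commands = build_commands()
    for cmd in commands:
        if dry_run:
            print("would run:", " ".join(cmd), "in", benchmark_dir)
            continue
        # check=True raises CalledProcessError if a script exits non-zero.
        subprocess.run(cmd, cwd=Path(benchmark_dir), env=env, check=True)
    return commands


if __name__ == "__main__":
    # Print the planned commands without executing them.
    run_all(dry_run=True)
```

Using `check=True` makes a failing script stop the whole run, so a missing API key is not hidden behind later scripts.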

Resources